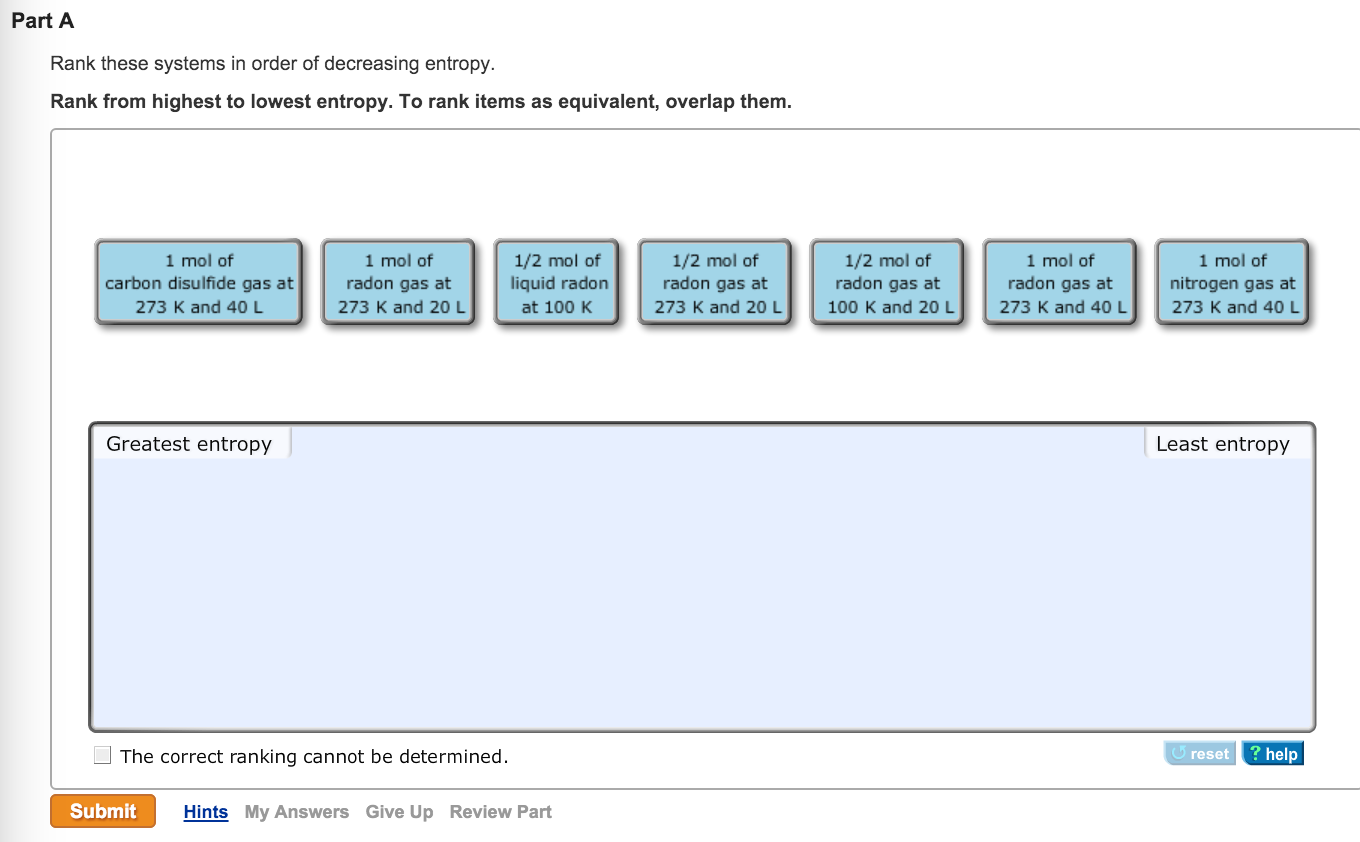

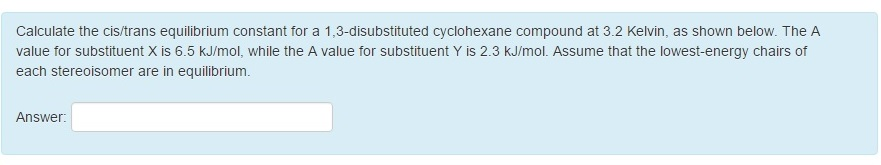

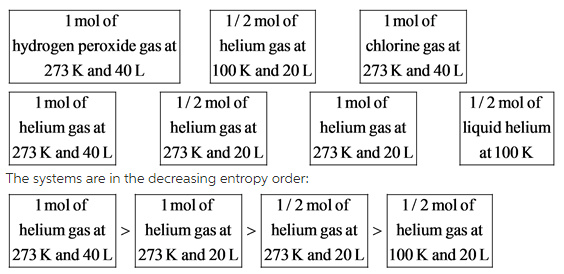

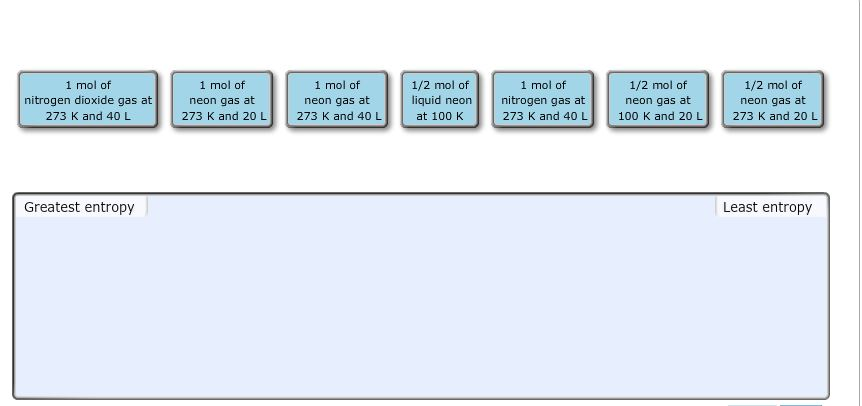

Background: Data analysis of high-throughput technologies (microarrays, next-generation sequencing) commonly relies on arbitrary p-value and fold-change thresholds to define the reliability and relevance of a set of features, partitioning the initial distribution into two sets. This work proposes a methodology that automates and standardizes statistical selection using established measures such as entropy, which are already used in information retrieval from large biomedical datasets. It departs from classical fixed-threshold methods, which use arbitrary p-value and fold-change values as selection criteria and whose efficacy also depends on how closely the data conform to parametric distributions. Unlike techniques based on fixed thresholds, such methods can adapt to different initial data distributions. Here, we propose a threshold-free selection method for identifying differentially expressed features based on robust, non-parametric statistics, which ensures independence from the properties of the statistical distribution and broad applicability. The goal is a threshold-free methodology for identifying the differentially expressed features that are most informative about the phenomenon under study.

Methods: Our work extends the rank product (RP) methodology with a neutral selection method of high information-extraction capacity. We introduce the calculation of the RP entropy of the distribution to isolate the features of interest by their contribution to its information content.

Results: Applying the proposed method to microarray (transcriptomic and DNA methylation) and RNA-seq count data of varying sizes and noise levels, we observe robust convergence of the different parameterizations to stable cutoff points. Functional analysis through BioInfoMiner and EnrichR was used to evaluate the information potency of the resulting feature lists. The feature lists are compact and rich in information, indicating phenotypic aspects specific to the tissue and biological phenomenon investigated. The methodology behaves consistently across different data types. Overall, the derived functional terms provide a systemic description highly compatible with the results of traditional statistical hypothesis testing techniques.

Conclusions: Selection by information-content measures efficiently addresses the problems that emerge from arbitrary thresholding, thus facilitating full automation of the analysis.

When samples of matter are ranked in decreasing order of entropy, a liquid comes last, because a liquid has much lower entropy than the corresponding gas. Entropy also depends on the amount of substance and on temperature: half a mole of a gas has less entropy than one mole under the same conditions, and the entropy of a gas decreases as it gets cooler.
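How the entropy of a gas depends on amount, temperature, and volume can be made concrete with the standard expression for the entropy change between two states. It assumes an ideal gas with a constant molar heat capacity at constant volume, $C_{V,m}$; these assumptions are ours, not stated in the text. Here $n$ is the amount of gas in moles and $R$ is the molar gas constant:

$$
\Delta S = n\,C_{V,m}\ln\frac{T_2}{T_1} + n\,R\ln\frac{V_2}{V_1}
$$

Because both terms are proportional to $n$, half a mole undergoing the same change has half the entropy change. Cooling ($T_2 < T_1$) makes the first term negative, so the entropy decreases.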

Comparing 20 L and 40 L, 1 mole of krypton occupying 40 L has the greater entropy. At the same temperature (273 K), the same amount of gas spread over a larger volume has more accessible positions. Next comes 1 mole of fluorine gas at 273 K and 40 L. At equal temperature, volume, and amount, the ranking depends on molecular complexity, so the order has to be rearranged: 1 mole of hydrogen peroxide gas at 273 K and 40 L has the highest entropy of these samples.
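As a numerical check on the 20 L versus 40 L comparison, the sketch below computes the entropy gained when a gas doubles its volume at constant temperature, ΔS = nR ln(V2/V1). Treating the gas as ideal is a simplifying assumption. The function name `delta_s_isothermal` is illustrative only.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)


def delta_s_isothermal(n_mol: float, v1_l: float, v2_l: float) -> float:
    """Entropy change (J/K) of n_mol of an ideal gas expanded isothermally.

    Assumes ideal-gas behaviour. Only the volume ratio enters the formula,
    so volumes can be given in litres, and the temperature (273 K here)
    cancels out of the isothermal result.
    """
    return n_mol * R * math.log(v2_l / v1_l)


ds_one_mole = delta_s_isothermal(1.0, 20.0, 40.0)
ds_half_mole = delta_s_isothermal(0.5, 20.0, 40.0)
print(f"1 mol, 20 L -> 40 L at 273 K: {ds_one_mole:.2f} J/K")
print(f"0.5 mol, 20 L -> 40 L at 273 K: {ds_half_mole:.2f} J/K")
```

For 1 mol this gives about 5.76 J/K. For 0.5 mol it gives exactly half that, which reflects entropy being an extensive property.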

When the volume or the temperature of a gas increases, its entropy increases, and entropy also increases with the number of moles of gas. Entropy is denoted by the symbol S, and a gas has greater entropy than the corresponding liquid. Entropy is an extensive property of matter, expressed as energy divided by temperature, and its SI unit is the joule per kelvin (J/K). It can be interpreted as a measure of the system's thermal energy per unit temperature that is unavailable for doing useful work.
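Returning to the rank product (RP) method summarized at the start of this post, the sketch below shows the classical RP statistic: each feature's ranks within the individual replicate comparisons, combined by their geometric mean. It then adds one possible entropy-based selection step. The text does not state how the RP entropy or the resulting cutoff is defined. The 1/RP weighting, the helper `entropy_selection`, and the "above-average entropy contribution" rule are therefore hypothetical choices for illustration, not the authors' method. The gene names and fold changes are made-up toy data.

```python
import math
from typing import Dict, List, Sequence


def rank_products(log_fc: Dict[str, Sequence[float]]) -> Dict[str, float]:
    """Classical rank product: geometric mean of per-replicate ranks.

    log_fc maps feature name -> log fold changes, one per replicate
    comparison. Rank 1 = largest log fold change (most up-regulated).
    """
    names = list(log_fc)
    k = len(next(iter(log_fc.values())))
    log_rank_sum = {name: 0.0 for name in names}
    for i in range(k):
        # Order features by their fold change in replicate i, largest first.
        ordered = sorted(names, key=lambda n: log_fc[n][i], reverse=True)
        for rank, name in enumerate(ordered, start=1):
            log_rank_sum[name] += math.log(rank)
    # Summing log-ranks and exponentiating the mean avoids huge products.
    return {name: math.exp(s / k) for name, s in log_rank_sum.items()}


def entropy_selection(rp: Dict[str, float]) -> List[str]:
    """HYPOTHETICAL entropy-based selection (not the published rule).

    Weights w = 1/RP are normalised to probabilities p. A feature is kept
    when its Shannon-entropy contribution -p*ln(p) exceeds the average
    contribution H/N, so no user-chosen threshold is needed.
    """
    weights = {name: 1.0 / value for name, value in rp.items()}
    total = sum(weights.values())
    contrib = {}
    for name, w in weights.items():
        p = w / total
        contrib[name] = -p * math.log(p)
    mean_contrib = sum(contrib.values()) / len(contrib)
    selected = [name for name, c in contrib.items() if c > mean_contrib]
    # Report selected features from strongest (smallest RP) to weakest.
    return sorted(selected, key=lambda name: rp[name])


# Toy data: log fold changes in three replicate comparisons (made up).
toy_log_fc = {
    "geneA": [2.1, 1.8, 2.5],
    "geneB": [1.5, 1.9, 1.2],
    "geneC": [0.1, -0.2, 0.0],
    "geneD": [-0.3, 0.2, 0.1],
    "geneE": [-0.5, -0.4, -0.6],
}
rp = rank_products(toy_log_fc)
selected = entropy_selection(rp)
print(selected)  # ['geneA', 'geneB']
```

Comparing each contribution with the mean H/N keeps the rule free of user-set p-value or fold-change cutoffs, in the spirit of the text. A real analysis would also need to handle down-regulated features (for example, by ranking in ascending order) and assess significance.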
